AI has been around for a long time, but ChatGPT brought it into the mainstream. As a result, AI is becoming more important, and we are learning new things about it all the time. Because this rapid growth is so recent, few rules govern it yet, even though many people want them.

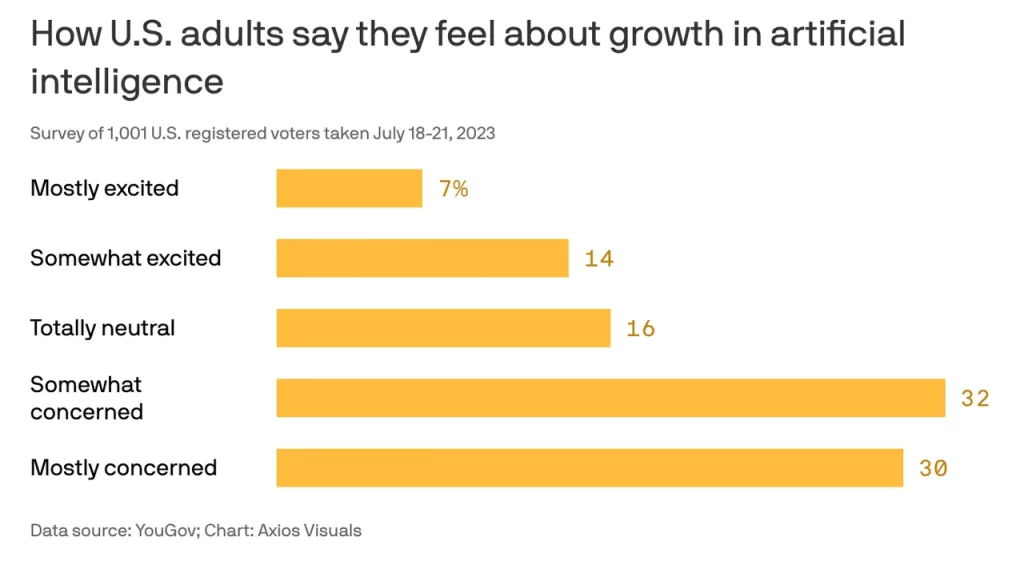

The Artificial Intelligence Policy Institute surveyed 1,001 people in the US about their views on AI and found that many of them are afraid of it.

Of those surveyed, 62% said they are somewhat or very worried about AI, and 86% think AI could accidentally cause something catastrophic.

With so many people worried, respondents favor caution: they think AI development should slow down and follow some rules.

But people do not want just anyone making the rules. A majority, 56%, want a dedicated government body to write them, and 82% do not trust tech company executives to set the rules for AI.

As for the pace of AI development, 72% want it to slow down, while only 8% want it to speed up.

The views in this poll echo what experts have been saying. Prominent figures such as Sam Altman of OpenAI, Elon Musk of Tesla, AI pioneer Geoffrey Hinton, and even President Joe Biden have all spoken about the dangers of AI. Some of them have called for AI regulation, including a body similar to the one that oversees atomic energy. The rules made in the next year matter a great deal because they will set the boundaries for how AI works in the future.